We Helped Train AI That Powers Vibe Coding. Here's How It Works.

AI-powered coding assistants are everywhere now. “Vibe coding,” a term popularised by Andrej Karpathy, is the new norm for developers. Instead of writing every function from scratch, developers now collaborate with AI assistants that autocomplete functions, write boilerplate, and even solve complex problems with just a few prompt lines. Developers sketch intent and let AI fill in the syntax. This shift has changed not just how developers work, but what skills matter most.

AI tools like GitHub Copilot, Cursor and V0 are pushing this wave forward. But the real genius isn’t just in the size or speed of these models; it lies in how well they’ve been trained to understand intent, context, and quality. And that’s where human feedback steps in.

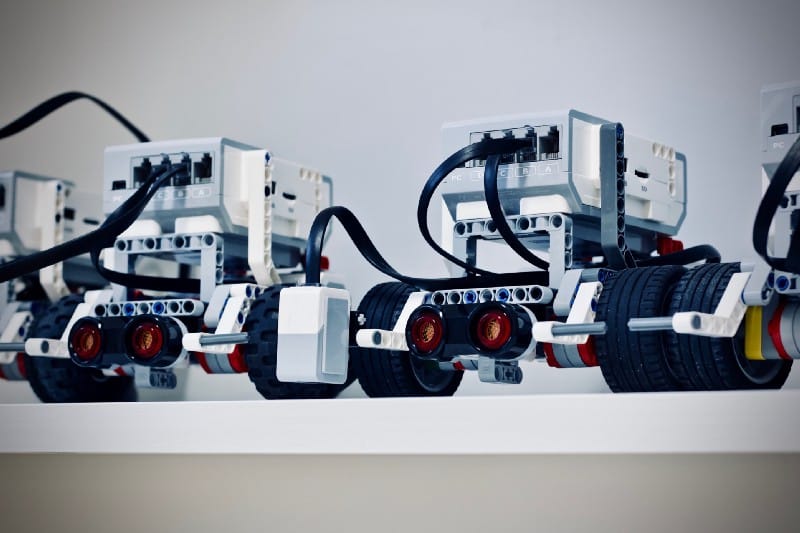

At Biz-Tech Analytics, we’ve been at the core of this transformation. Our team of 350+ developers supplies the expert evaluations and detailed feedback that some of the world’s top AI companies need to fine-tune large language models (LLMs) for code generation and to train LLM systems for production. We also bring complementary capabilities in text annotation, image annotation, video annotation, and synthetic data generation to build high-performing models.

Where Coding AI Tools Fall Short

Despite impressive advancements, current coding AI platforms still face critical limitations:

- Under-specification Sensitivity: Vague prompts often yield incomplete or misaligned outputs. For instance, simple UI requests can trigger verbose, over-engineered responses that miss the functional intent.

- Trained on Flawed Public Data: Most models are trained on publicly available code from forums, open-source repositories, and Q&A sites. While abundant, this data often includes outdated, non-idiomatic, or even insecure code. As a result, AI outputs can mirror poor practices and technical debt, especially when asked to generate solutions for nuanced or modern development problems.

- Shallow Contextual Reasoning: Models tend to interpret prompts at surface level. They lack awareness of architectural constraints, edge cases, or long-term maintainability.

- Inconsistency Across Complexity Levels: While tools like V0 handle boilerplate well, they struggle with high-complexity use cases, particularly those involving multiple state transitions or dynamic data sources.

- Poor Long-Term Memory: These models struggle to retain context over extended periods of interaction. For example, multi-step coding sessions involving interconnected files or iterative feedback loops often break down because the AI forgets previous inputs or decisions.

Even top-tier LLMs like GPT-4 or Claude struggle with correctness beyond syntax, with task-specific tradeoffs such as performance versus readability, and with team-specific coding conventions or architectural preferences. They often produce solutions that are technically correct but overly verbose or inefficient, and they miss edge cases and context-specific requirements.

In recent projects we contributed to for global technology companies, we observed these challenges firsthand. While the AI could generate scaffolding, the real value emerged from what happened after: human-led refinement, debugging, and deployment.

How Top Global AI Labs Are Making These Coding Models

Critique aside, ever wonder how the big players actually train their coding models? It turns out there is no single recipe, just a handful of powerful strategies that have emerged over time. Based on what we’ve seen (and helped build), the most effective labs rely on three core approaches to create smarter, more context-aware developer tools:

1. Human Labelled Synthetic Data Generation

In this approach, AI models are trained on a curated dataset where both the prompts and the correct outputs are explicitly crafted and verified by experts. This technique provides the AI with clear examples of what “right” looks like, not just in terms of code correctness, but clarity, structure, and completeness.

For instance, in a recent large-scale effort we contributed to, a cross-functional team set out to turn natural language prompts into fully functional, production-grade applications, with developers stepping in to take each one to the finish line.

The team conducted code reviews, fixed logic bugs, added missing features, improved responsiveness, and deployed the applications live. Each prompt ultimately resulted in a working, hosted project with full documentation, all of which created high-quality, structured training data. Challenges such as incomplete functionality or poor styling exposed the AI’s current gaps and reinforced the importance of expert human refinement. Yet the hybrid approach demonstrated that synthetic data generation, paired with experienced developer oversight, offers a scalable, repeatable way to train robust coding AI.
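As a rough illustration, one record of training data from a workflow like this might be structured as below. This is a minimal sketch, not the schema used in the project: the `TrainingExample` class, every field name, and the rule of keeping only deployed projects are assumptions made for the example.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class TrainingExample:
    """One expert-verified prompt/output pair (all field names are hypothetical)."""
    prompt: str                                      # natural-language request
    model_draft: str                                 # code the model first produced
    final_code: str                                  # code after human review and fixes
    fixes: list[str] = field(default_factory=list)   # what the reviewers changed
    deployed: bool = False                           # was the project hosted live?


def to_jsonl(examples: list[TrainingExample]) -> str:
    """Serialize examples as JSON Lines, keeping only deployed projects.

    Filtering on `deployed` is an assumption: it stands in for
    "a working, hosted project" as the quality bar.
    """
    lines = [json.dumps(asdict(ex)) for ex in examples if ex.deployed]
    return "\n".join(lines)


examples = [
    TrainingExample(
        prompt="Build a to-do list page",
        model_draft="<div>todo</div>",
        final_code="<ul id='todos'></ul>",
        fixes=["added missing delete button", "fixed mobile layout"],
        deployed=True,
    ),
    TrainingExample(
        prompt="Build a weather widget",
        model_draft="<div>weather</div>",
        final_code="<div>weather</div>",
        deployed=False,  # never shipped, so it is excluded from the dataset
    ),
]

dataset = to_jsonl(examples)
```

Keeping the model's first draft next to the reviewed version is a design choice in this sketch: it preserves the "before and after" that shows where the human refinement happened.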

2. Reinforcement Learning From Human Feedback (RLHF)

RLHF enhances models by incorporating direct feedback from expert evaluators who assess and rank AI-generated outputs. Unlike hard labels, this method captures nuance: how readable, efficient, or idiomatic the code is, or how well it aligns with the developer’s intent.

In a recent RLHF project spanning 25 programming languages, our evaluators were tasked with reviewing AI-generated coding solutions under tight deadlines. They didn’t just glance over code; they built test cases, validated logic, annotated issues, and documented the entire debugging process. Every piece of feedback had to follow a structured taxonomy, ensuring consistency across languages and tasks.

The result was more than objective feedback: it was a rich dataset of subjective preference signals that helped fine-tune the model’s reward systems. This approach enabled faster iteration cycles, improved reliability, and better generalization across a wide spectrum of developer use cases.
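To make the idea of "preference signals" concrete, here is a minimal sketch of how a ranked list of outputs can be turned into pairwise comparisons, and how a reward model is commonly scored against them. The post does not describe how the labs trained their reward models, so the Bradley-Terry style pairwise loss below is an assumption (it is a common choice in RLHF), and the function names and record keys are hypothetical.

```python
import math
from itertools import combinations


def ranking_to_pairs(prompt: str, ranked_outputs: list[str]) -> list[dict]:
    """Expand a best-to-worst ranking into (chosen, rejected) preference pairs.

    `combinations` preserves input order, so the first element of each
    pair is always the output the evaluator ranked higher.
    """
    return [
        {"prompt": prompt, "chosen": better, "rejected": worse}
        for better, worse in combinations(ranked_outputs, 2)
    ]


def pairwise_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Bradley-Terry style loss: -log(sigmoid(r_chosen - r_rejected)).

    Rewritten as log(1 + exp(-diff)). The loss is small when the reward
    model scores the preferred output higher, and large when it does not.
    """
    diff = reward_chosen - reward_rejected
    return math.log1p(math.exp(-diff))


# An evaluator ranked three candidate solutions, best first.
pairs = ranking_to_pairs(
    "Reverse a linked list",
    ["iterative_solution", "recursive_solution", "buggy_solution"],
)

# Hypothetical reward scores: agreeing with the human costs less.
loss_when_model_agrees = pairwise_loss(2.0, 0.0)
loss_when_model_disagrees = pairwise_loss(0.0, 2.0)
```

A ranking of n outputs yields n(n-1)/2 pairs, which is one reason rankings are an efficient way to collect preference data from expert evaluators.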

3. Model Evaluation

By running the same prompt through different human reviewers or benchmarking tasks, researchers can assess how the model performs across varied scenarios. These evaluations become signals that help refine the model’s responses, prioritize edge cases, and surface failure modes.

Model evaluation doesn’t train the AI directly, but it creates the scorecard that future iterations are optimized against.

Let’s consider the use case of developers at different stages of expertise valuing different things. A junior engineer might want more guidance and explanation, while a senior might prioritize brevity, precision, or architectural cleanliness. Instead of treating all users the same, this method evaluates AI responses based on how helpful they are to people at different levels.

In practice, this meant asking evaluators to rate model outputs as if they were users themselves. Batches of prompt-response pairs were reviewed weekly, with labelers disclosing their level of experience before providing feedback. Their assessments focused on clarity, helpfulness, and overall user satisfaction, not just technical correctness.

This user-centered approach created insights into how different types of developers perceive AI assistance, allowing the model to adapt its tone, depth, and structure accordingly.
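A scorecard of this kind can be sketched as a simple aggregation of ratings by experience level. The snippet below is illustrative only: the record keys (`level`, `criterion`, `score`), the 1-to-5 scale, and the sample ratings are assumptions, not data from the project.

```python
from collections import defaultdict
from statistics import mean


def mean_scores_by_level(ratings: list[dict]) -> dict:
    """Average scores per (experience level, criterion) pair.

    Each rating is a dict with hypothetical keys:
    'level' (e.g. "junior"), 'criterion' (e.g. "clarity"), 'score' (1-5).
    """
    buckets = defaultdict(list)
    for rating in ratings:
        buckets[(rating["level"], rating["criterion"])].append(rating["score"])
    return {key: mean(scores) for key, scores in buckets.items()}


# Made-up ratings for one verbose, heavily explained model response.
ratings = [
    {"level": "junior", "criterion": "helpfulness", "score": 5},
    {"level": "junior", "criterion": "helpfulness", "score": 4},
    {"level": "senior", "criterion": "helpfulness", "score": 2},
    {"level": "senior", "criterion": "clarity", "score": 3},
]

scorecard = mean_scores_by_level(ratings)
```

Splitting the averages by level is what lets a gap show up: in this made-up sample, the same response scores well with juniors and poorly with seniors, which a single overall average would hide.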

Together, these three strategies form a powerful triad. Synthetic data generation with human annotation ensures cleaner input-output pairs. RLHF builds models that understand nuanced preferences. Multi-level evaluation personalizes outputs by aligning them with user expectations. In tandem, these approaches define the architecture of developer-aligned AI systems.

What’s Next: Smarter, Sharper, More Personal

We’re slowly moving from assistants that generate code to partners that understand how you build. And while the black box may never go away entirely, we’re learning how to peek inside.

Looking ahead, the next generation of coding AI will likely focus on three things:

- Personalization by default: Tools that adapt to your skill level, your stack, your habits; not a generic developer profile.

- Debugging the model itself: Not just debugging your code, but letting you ask “why did you write this?” and get an interpretable answer.

- Constant feedback loops: AI tools will increasingly learn from the environments they live in. Think GitHub issues, pull request comments, Slack threads, code reviews, even inline suggestions that developers accept or reject. Every interaction, explicit or subtle, becomes a form of feedback.

And above all, humans will still be essential. Behind every useful coding suggestion is a dataset someone curated, a label someone applied, and a decision someone judged. Training these models is still a deeply human process. And that’s not changing anytime soon.

At Biz-Tech Analytics, we’re proud to be part of this invisible but essential layer powering the next generation of developer tools. And we’re just getting started!

Want to learn more about our work training and evaluating AI for code generation?

Reach out to us today!